By Nils Krumrey, Global Customer Success Manager, Logpoint

When customers speak to us, the number one requirement on their list is often “log management.” That sounds reasonably straightforward, but if you want to do something more than a box-ticking exercise, it’s worth diving into what log management means.

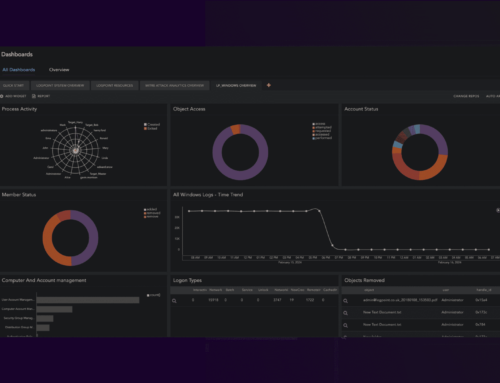

Logpoint Converged SIEM collects log data from endpoints, which are devices and applications across the entire IT infrastructure. Those logs are then transformed into high-quality data through normalization and correlations. Then the solution automatically identifies and sends alerts about incidents and abnormalities using machine learning algorithms.

What does logging mean?

Logging is the recording of an event. Any type of temporal event data (think event sequences or web log data) is a log. Traditionally, we think of logs as long, complicated text files, but today’s logs can come from virtually anywhere, in any form. A virus scanner that keeps track of client update events in its database? That’s a log. Network flow data from a Virtual Private Cloud in AWS? That’s a log, too. And of course, there are all the usual suspects like Windows Event Logs, web server logs, and file access logs.

What is log management?

If we have logs, why do they need managing? The term “log management” dates from a time when logs were mainly text files, administrators were wrestling with disk space, and log99 rolled over to log00. Back then, logs needed to be “managed away” so that the source system could breathe again.

While text files made way for Syslog, APIs and databases, the simple log00 example is still very much the starting point for log management. Perhaps not so much in terms of disk space these days, but to ensure that we capture logs before they disappear. It is surprising how many systems still overwrite their logs and how quickly they do it. Often, logs only exist for 24 hours or less before they are overwritten and disappear. If we want to make sense of this log data later, we need to make sure that it is being collected and not lost.
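To make the "capture logs before they disappear" point concrete, here is a minimal Python sketch of a collector that remembers how far it has read in a log file and notices when the file has been overwritten. The function name `collect_new_lines` and the offset-based approach are assumptions made for illustration, not a description of how any particular product collects logs.

```python
import os


def collect_new_lines(path, offset):
    """Return (lines, new_offset) for data appended to `path` since `offset`.

    If the file is now smaller than the saved offset, assume it was
    truncated or overwritten and start again from the beginning.
    """
    size = os.path.getsize(path)
    if size < offset:
        offset = 0  # the source system rotated or overwrote the file

    with open(path, "rb") as handle:
        handle.seek(offset)
        data = handle.read()

    # Consume complete lines only; a partial last line is left for next time.
    end = data.rfind(b"\n") + 1
    lines = data[:end].decode("utf-8", errors="replace").splitlines()
    return lines, offset + end
```

Calling this on a schedule and persisting the returned offset means a log that only lives for a few hours is still picked up. The size check is a simple heuristic: it will not notice a file that was replaced by a larger one between two polls, so a short polling interval matters.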

Log collection

We have determined what a log message is and why log messages need capturing, but how do we make this work in practice?

First, the log message needs to be collected. It either needs to be picked up, or something needs to drop it off at our doorstep. For genuine log files, collection can mean logging into a system and fetching the files using SCP or SFTP.

Alternatively, the source system can be configured to drop off logs regularly at the solution’s built-in FTP server. Other than files, it could be Syslog data (widespread for network devices) or SNMP traps. In the world of Windows, it could be an agent, a Windows Event Forwarding Collector, or WMI. For databases, it could be ODBC. It could also be the Office 365 Management API, the Defender Alert API, the EventHubs API, or an S3 bucket.
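As a small illustration of what arrives with Syslog, the Python sketch below splits the priority prefix of a raw Syslog message into facility and severity. The calculation (priority = facility × 8 + severity) and the severity names follow the Syslog standard; the function name `split_priority` and the shape of the returned dictionary are assumptions made for this example.

```python
import re

# Severity names in numeric order (0-7), as defined by the Syslog standard.
SEVERITIES = [
    "emergency", "alert", "critical", "error",
    "warning", "notice", "informational", "debug",
]

PRI_PATTERN = re.compile(r"^<(\d{1,3})>(.*)$", re.DOTALL)


def split_priority(raw):
    """Split '<PRI>message' into facility number, severity name and message."""
    match = PRI_PATTERN.match(raw)
    if match is None:
        raise ValueError("no syslog priority prefix found")
    priority = int(match.group(1))
    if priority > 191:  # highest valid value: facility 23, severity 7
        raise ValueError("syslog priority out of range")
    facility, severity = divmod(priority, 8)
    return {
        "facility": facility,
        "severity": SEVERITIES[severity],
        "message": match.group(2),
    }


# Hypothetical message: priority 34 is facility 4, severity 2 (critical).
print(split_priority("<34>su: authentication failed"))
```

A real collector would go on to parse the timestamp, host and application name as well; this sketch stops at the priority because that part is identical for every Syslog source.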

The list of log collection methods is practically endless, but it is vital that the log management solution supports the platforms and techniques you are using to generate log data. Otherwise, there will be blind spots.

Centralized log aggregation

One reason to collect logs is to make sure that they live somewhere safe and somewhere you can get to quickly. There’s little point in having log files scattered across multiple places where they are of no use. You need to know where they are and how to access them at any time, so centralized log aggregation is a quick way of getting value.

You must be able to deploy a solution efficiently, preferably at no extra cost, in distributed environments. For example, a remote site might produce a surprising number of log messages that would saturate the WAN link. On cloud platforms, log egress and ingress might lead to prohibitive costs. In these scenarios, it could be vital to keep logs stored locally or in the cloud while still being able to search through them remotely from a central Search Head. Converged SIEM is flexible enough to adjust to your needs and environment.
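The idea of searching remotely instead of shipping every log across the WAN can be sketched in a few lines of Python. This is an in-process stand-in, not a description of Converged SIEM’s architecture: the `SiteNode` class, the `search_all` function and the event fields are all hypothetical, and in a real deployment each `search` call would be a network request to the remote site.

```python
from dataclasses import dataclass, field


@dataclass
class SiteNode:
    """A site that keeps its own logs and answers searches locally."""
    name: str
    events: list = field(default_factory=list)

    def search(self, keyword):
        # Only matching events leave the site, not the full log volume.
        return [e for e in self.events if keyword in e["message"]]


def search_all(sites, keyword):
    """Fan a query out to every site and merge the matches by timestamp."""
    results = []
    for site in sites:
        for event in site.search(keyword):
            results.append({"site": site.name, **event})
    # ISO 8601 timestamps in the same format sort correctly as strings.
    return sorted(results, key=lambda e: e["timestamp"])


branch = SiteNode("branch-office", [
    {"timestamp": "2024-01-01T10:05:00Z", "message": "login failed for alice"},
    {"timestamp": "2024-01-01T10:06:00Z", "message": "backup completed"},
])
cloud = SiteNode("cloud", [
    {"timestamp": "2024-01-01T10:01:00Z", "message": "login failed for bob"},
])

for hit in search_all([branch, cloud], "login failed"):
    print(hit["site"], hit["timestamp"], hit["message"])
```

The design choice worth noting is that the query travels to the data: the central side receives two small results here, while the unrelated backup message never crosses the link.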

Long-term log storage, log retention and log rotation

One decision every customer needs to make is how long to store log messages. There are a variety of considerations here:

- Compliance: are there any regulatory reasons why log messages need to be kept or indeed be discarded? PCI, GDPR and corporate policies come into play here, for data that must be kept as well as data that must be discarded. Setting granular retention policies is beneficial. Perhaps specific log messages containing certain personally identifiable information should be kept for a shorter amount of time, or some of their fields should be encrypted.

- Operational needs: are there certain use cases where having a log message would be beneficial after the fact? For example, how long after the activity took place could there realistically (or legally) be a third-party copyright claim against a student downloading movies illegally on the university network?

- Log volumes and cost: log messages take up disk space, and disk space costs money. Some vendors even charge for the amount of data stored using their tools. That leads to a specific cost for every day that log messages are stored and retained. Ultimately, there comes a point where the cost of keeping logs stored for longer outweighs the benefits they might deliver. Then they should probably be discarded.
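The considerations above can be turned into a rough Python sketch of granular retention: a retention period per log category, a check for whether a message has outlived it, and an estimate of how much storage the policy implies. The category names, the retention periods and the daily volumes are invented for illustration; real values depend on your own compliance and operational requirements.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical retention periods in days, per log category.
RETENTION_DAYS = {"authentication": 365, "web_proxy": 90, "debug": 7}
DEFAULT_RETENTION_DAYS = 30


def is_expired(category, logged_at, now):
    """True if a message of this category has outlived its retention period."""
    days = RETENTION_DAYS.get(category, DEFAULT_RETENTION_DAYS)
    return now - logged_at > timedelta(days=days)


def retained_volume_gb(daily_gb_by_category):
    """Steady-state storage: daily volume multiplied by retention days."""
    return sum(
        daily_gb * RETENTION_DAYS.get(category, DEFAULT_RETENTION_DAYS)
        for category, daily_gb in daily_gb_by_category.items()
    )


now = datetime(2024, 6, 1, tzinfo=timezone.utc)
eight_days_ago = now - timedelta(days=8)
print(is_expired("debug", eight_days_ago, now))           # True
print(is_expired("authentication", eight_days_ago, now))  # False

# 2 GB/day kept for 365 days plus 10 GB/day kept for 7 days.
print(retained_volume_gb({"authentication": 2, "debug": 10}))  # 800
```

Multiplying the retained volume by your own cost per gigabyte gives the figure to weigh against the benefit of keeping each category for longer.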

Choosing the right storage solution for log management

When it comes to the storage of logs itself, there are different ways of addressing it. Some cloud and managed services include all storage costs in a subscription price. Still, additional retention tends to be expensive, and there is little control over what gets stored where and for how long, with the data leaving the customer’s ownership.

With other solutions, data is owned by the customers, who deploy their own storage tiers. Depending on the architecture, the solution might automatically move older data onto a slower storage tier (such as slower disk NAS rather than DAS/SAN and SSDs). Some vendors send log data to an offline or “archive” tier, which necessitates a slow restore process if the log data is actually needed.

As usual, a flexible solution gives the most control over log retention and costs. Maintaining ownership of data, storing different log messages for different amounts of time, protecting sensitive data while still being able to search through it, and having the solution manage storage tiers automatically should remove most of the challenges of an inflexible log management solution.
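Age-based tiering of this kind boils down to a simple lookup, sketched here in Python. The tier names and the age thresholds are assumptions chosen for the example, not defaults of any product.

```python
# Hypothetical tiers: (maximum age in days, tier name), fastest first.
TIERS = [(30, "hot"), (180, "warm")]
ARCHIVE_TIER = "archive"


def tier_for_age(age_days):
    """Pick the storage tier for log data of the given age in days."""
    if age_days < 0:
        raise ValueError("age cannot be negative")
    for max_age, name in TIERS:
        if age_days <= max_age:
            return name
    # Anything older than the last threshold goes to the slowest tier.
    return ARCHIVE_TIER


for age in (1, 45, 400):
    print(age, tier_for_age(age))
```

Keeping the thresholds in one table makes the trade-off visible: widening the "hot" window speeds up searches over recent data at the price of more fast storage.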

Log analysis, log search and reporting – Why would you use log management?

So, where does that leave us? We have collected logs and we have ensured they are stored centrally and for the right amount of time. But, after all this work, it certainly makes sense to actually use them for something!

This is where mere log management ties into Converged SIEM (Security Information and Event Management). While security is an important focus area, with SIEM, any event from a log file can be found, alerted on, and visualized through dashboards and reports.

So, collecting and storing logs is a significant first step. Sometimes, collecting and storing logs is all that is needed to address some compliance requirements. However, the real value starts to emerge from all the things you can do with the logs, such as log analysis.

With Converged SIEM, you get access to the full power of logs. All log messages are centrally available immediately. This means that you will always know where to troubleshoot, whether the problem is network connectivity issues, virus scanner alerts, users who keep locking themselves out, or any number of other things that would otherwise have required manually hunting through hard-to-read log files from a multitude of systems, if they are even still available.
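As a taste of what log analysis looks like once events are centrally available, the Python sketch below finds users who keep locking themselves out. It assumes events have already been normalized into dictionaries with `event_id` and `user` fields (hypothetical field names) and relies on Windows Security event ID 4740, which records an account lockout. The function name and the threshold are illustrative choices.

```python
from collections import Counter

ACCOUNT_LOCKED_OUT = 4740  # Windows Security event: a user account was locked out


def repeated_lockouts(events, threshold=3):
    """Return users with at least `threshold` lockout events, with counts."""
    counts = Counter(
        event["user"]
        for event in events
        if event.get("event_id") == ACCOUNT_LOCKED_OUT
    )
    return {user: n for user, n in counts.items() if n >= threshold}


# Hypothetical normalized events from several domain controllers.
events = [
    {"event_id": 4740, "user": "alice"},
    {"event_id": 4740, "user": "alice"},
    {"event_id": 4624, "user": "alice"},
    {"event_id": 4740, "user": "bob"},
    {"event_id": 4740, "user": "alice"},
]

print(repeated_lockouts(events))  # {'alice': 3}
```

The same pattern of filter, group and threshold is the basis for many alerts and dashboard widgets; without central collection, the lockout events would have to be gathered by hand from each system first.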

For even more advanced functionality that goes beyond log management tools, make sure to check out some of the other blogs on the Logpoint site!